
What happens here is that the very computation of the covariance matrix squares the condition number of $\mathbf X$, so especially in the case when $\mathbf X$ has some nearly collinear columns (i.e.
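
The squaring of the condition number can be seen directly from the SVD. The following is a short derivation, assuming $\mathbf X$ has full column rank with singular values $s_1 \ge \dots \ge s_p > 0$; the symbols $s_i$, $p$ and $\kappa$ (the 2-norm condition number) are notation introduced for this sketch:

$$
\mathbf X = \mathbf U \mathbf S \mathbf V^\top
\;\Longrightarrow\;
\mathbf X^\top \mathbf X
= \mathbf V \mathbf S \mathbf U^\top \mathbf U \mathbf S \mathbf V^\top
= \mathbf V \mathbf S^2 \mathbf V^\top,
\qquad
\kappa(\mathbf X^\top \mathbf X) = \frac{s_1^2}{s_p^2} = \kappa(\mathbf X)^2 .
$$

A constant scaling of $\mathbf X^\top \mathbf X$ multiplies every eigenvalue by the same factor, so it leaves this ratio unchanged.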

SVD is slower but is often considered to be the preferred method because of its higher numerical accuracy.

As you state in the question, principal component analysis (PCA) can be carried out either by SVD of the centered data matrix $\mathbf X$ (see this Q&A thread for more details) or by the eigen-decomposition of the covariance matrix $\frac{1}{n-1}\mathbf X^\top \mathbf X$ (with $n$ the number of rows of $\mathbf X$). [...] we can observe how SVD still performs well but EIG breaks down.

The chemometrics lecture where I first learned PCA used solution (2), but it was not numerically oriented, and my numerics lecture was only an introduction and didn't discuss SVD as far as I recall.

If I understand Holmes: Fast SVD for Large-Scale Matrices correctly, your idea has been used to get a computationally fast SVD of long matrices. That would mean that a good SVD implementation may internally follow (2) if it encounters suitable matrices (I don't know whether there are still better possibilities). This would mean that for a high-level implementation it is better to use the SVD (1) and leave it to the BLAS to take care of which algorithm to use internally.

Quick practical check: OpenBLAS's svd doesn't seem to make this distinction. On a matrix of 5e4 x 100, `svd(X, nu = 0)` takes on median 3.5 s, while `svd(crossprod(X), nu = 0)` takes 54 ms (called from R with microbenchmark). Squaring the singular values of $\mathbf X$ is of course fast, and up to that the results of both calls are equivalent.

The check was run with libopenblas-base and libopenblas-dev 0.1alpha2.2-3 (optimized BLAS library based on GotoBLAS2).
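
The accuracy loss of the covariance route can be reproduced without any linear algebra library. The Python sketch below is an illustration only: the 3 x 2 matrix (a Läuchli-type example), the value `eps = 1e-8`, and the helper names `gram` and `smallest_eig_sym2` are assumptions chosen here, not taken from the thread. The two columns are nearly collinear and the exact singular values are $\sqrt{2+\epsilon^2}$ and $\epsilon$, but forming $\mathbf X^\top \mathbf X$ in double precision rounds $1+\epsilon^2$ to 1.

```python
import math

def gram(X):
    """Return X^T X for a matrix given as a list of rows."""
    p = len(X[0])
    return [[sum(row[i] * row[j] for row in X) for j in range(p)]
            for i in range(p)]

def smallest_eig_sym2(A):
    """Smallest eigenvalue of a symmetric 2x2 matrix (closed form)."""
    a, b, c = A[0][0], A[0][1], A[1][1]
    return (a + c) / 2 - math.hypot((a - c) / 2, b)

eps = 1e-8  # illustrative value; eps**2 is below double-precision resolution near 1
X = [[1.0, 1.0],
     [eps, 0.0],
     [0.0, eps]]

G = gram(X)                      # entries 1 + eps^2 are rounded to exactly 1.0
lam_min = smallest_eig_sym2(G)   # eigenvalue route: smallest eigenvalue of X^T X
s_min_via_eig = math.sqrt(max(lam_min, 0.0))
s_min_exact = eps                # known analytically for this matrix

print("X^T X =", G)
print("smallest singular value via EIG route:", s_min_via_eig)
print("exact smallest singular value:", s_min_exact)
```

Here the eigenvalue route reports a smallest singular value of exactly 0.0, so the information about $\epsilon$ is lost before any decomposition is attempted. An SVD applied to $\mathbf X$ itself never forms $1+\epsilon^2$ and so does not suffer from this particular rounding step.
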
If so, then why so many texbooks seem to advocate or just mention only way (1)? Maybe it is efficient and I'm missing something? Now, my question: if data $\bf X$ is a big matrix, and number of cases is (which is often a case) much greater than the number of variables, then way (1) is expected to be much slower than way (2), because way (1) applies a quite expensive algorithm (such as SVD) to a big matrix it computes and stores huge matrix $\bf U$ which we really doesn't need in our case (the PCA of variables). The decomposition may be eigen-decomposition or singular-value decomposition: with square symmetric positive semidefinite matrix, they will give the same result $\bf R=VLV'$ with eigenvalues as the diagonal of $\bf L$, and $\bf V$ as described earlier. $\bf R$ can be correlations or covariances etc., between the variables ). We can then compute component values as $ \bf C=XV=US$.Īnother way to do PCA of variables is via decomposition of $\bf R=X'X$ square matrix (i.e.

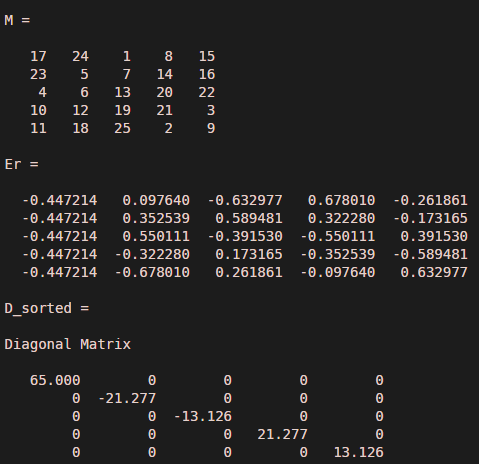

roots of the eigenvalues) occupying the main diagonal of $\bf S$, right eigenvectors $\bf V$ are the orthogonal rotation matrix of axes-variables into axes-components, left eigenvectors $\bf U$ are like $\bf V$, only for cases. That is, if we have data $\bf X$ and want to replace the variables (its columns) by principal components, we do SVD: $\bf X=USV'$, singular values (sq. Many texts on linear PCA advocate using singular-value decomposition of the casewise data. This question is about an efficient way to compute principal components. (c) Classify the quadratic form as positive definite, negative definite or indefinite. Question 1 Do (a), (b) and (c) for the quadratic forms in 1.1 and 1.2: (a) Make a change-of-variable substitution x = P y that transforms the quadratic form to one with no cross-product terms. Give answers to 3 decimals where applicable. Write/type your answers on an answer sheet and save it to your dorpbox.
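
As a sketch of what parts (a) and (c) of Question 1 ask for, here is a worked example on a hypothetical quadratic form. It is not one of the forms in 1.1 or 1.2; the form $Q$, the matrix $A$ and all numbers are assumptions chosen for illustration.

$$
Q(\mathbf x) = 3x_1^2 + 2x_1x_2 + 3x_2^2 = \mathbf x^\top A\,\mathbf x,
\qquad
A = \begin{pmatrix} 3 & 1 \\ 1 & 3 \end{pmatrix},
\qquad
\det(A - \lambda I) = (3-\lambda)^2 - 1 = 0
\;\Rightarrow\; \lambda_1 = 4,\ \lambda_2 = 2 .
$$

$$
P = \frac{1}{\sqrt 2}\begin{pmatrix} 1 & 1 \\ 1 & -1 \end{pmatrix}
\approx \begin{pmatrix} 0.707 & 0.707 \\ 0.707 & -0.707 \end{pmatrix},
\qquad
\mathbf x = P\mathbf y
\;\Rightarrow\;
Q = \mathbf y^\top (P^\top A P)\,\mathbf y = 4y_1^2 + 2y_2^2 .
$$

The columns of $P$ are unit eigenvectors of $A$, so the cross-product term disappears. Both eigenvalues are positive, so this example form is positive definite.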

Use the MATLAB functions to find eigenvalues and eigenvectors in Question 1 and the SVD in Question 2.

Save the MATLAB file into your dropbox.
